Parallel I/O

As the data sets we process with ParaView get bigger, we are finding that, for many of our users, a significant portion of the wait time in ParaView is I/O. We had originally assumed that moving our clusters to parallel disk drives with greater overall read performance would largely fix the problem. Unfortunately, in practice we have found that our read rates from the storage system are often far below its potential.

This document analyzes the parallel I/O operations performed by VTK and ParaView (specifically reads since they are by far the most common), hypothesizes on how these might impede I/O performance, and proposes a mechanism that will improve the I/O performance.

File Access Patterns

VTK and ParaView, by design, support many different readers with many different formats. Rather than iterate over every different reader that is or could be, we categorize the file access patterns that they have here. We also try to identify which readers perform which access patterns. Readers that do not read in parallel data will not be considered for obvious reasons.

Common File

There are some cases where all the processes in a parallel job each read in the entire contents of a single file. The parallel data readers are usually smart enough to read in only the portion of the data that they need. However, there is usually a collection of "metadata" that all processes require in order to read in the actual data. This metadata includes things like domain extents, number formats, and what data is attached. Often this metadata is packaged up into its own file (along with pointers to #Individual Files that hold the actual data).

In this case, every process independently reads the entire metadata file because all the readers are designed to perform reads with no communication amongst them. This is so that the readers will also work in a sequential parallel read mode where data is broken up over time rather than across processors and there are no other processors with which to collaborate.

Why it is inefficient:

The storage system is being bombarded with many similar read requests at about the same time. All the requests are likely to be near each other (with respect to offsets in the file) and hence on the same disk. Unless the read requests are synchronized well and the parallel storage device has really good caching that works across multiple clients (neither of which is very likely), the storage system will be forced to constantly move the head on the disk to satisfy all the incoming requests without starving any of them. Moving the head causes a delay measured in milliseconds, which slows the reading to a crawl.

What readers do this:

All the readers that have a metadata file use this access pattern. This includes the PVDReader as well as all of the XMLP*Readers and the pvtk file reader (partitioned legacy VTK files). The EnSight server of servers file is also a metadata file that points to individual case files and must be read in by (or distributed to) all processes. The XDMF format also comprises a metadata file that describes the data in an HDF5 file.

Although these metadata files are almost always small, especially when compared with the actual data, reading these files can be surprisingly slow. For example, I recently observed a 64-process job take several minutes to read in a small (~7KB) pvd metadata file. In contrast, the same 64 processes could read in 1.2GB of actual data in less than 10 seconds.

Monolithic File

Data formats that handle small to medium sized data are almost always contained in a single file for convenience. If these data formats can be scaled up, they often are. For example, raw image data can be placed in an image file of any size. Although generating such a large single file often requires some dedicated processing, it is sometimes done for convenience and usability.

For this type of file to be read into ParaView in a parallel environment, it is important that the reader be able to read in a portion of the data. For example, the raw data image reader accepts extents for the data, and then reads in only the parts of the file associated with those extents.

Why it is inefficient:

As in the case of the #Common File, we have a lot of read accesses happening more or less simultaneously to a single file because each process is trying to read its portion of the data. However, in this case the problems are compounded because each process is reading a different portion of the data. Thus, whatever caching strategy the I/O system is implementing has little to no chance of satisfying multiple requests with a single read.

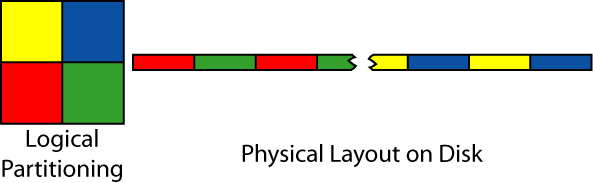

To make matters worse, the reads are often laid out in a way that all but minimizes I/O performance. The reason is that even though extents are logically cohesive, the pieces are actually scattered over various portions of the disk. For example, in the accompanying image, a 2D image is divided amongst four processors. Although each piece is contiguous in two dimensions, each partition is scattered in small pieces throughout the linear file on disk.

So, in practice, the storage system is bombarded by many requests for short segments of data. With no a priori knowledge, the storage system is required to fulfill the requests in a more or less ordered manner so that no process gets starved. To satisfy these requests, the disk is forced to move its reading head back and forth, thereby slowing the read down to a crawl.
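The scattering described above can be made concrete with a little arithmetic. The following is a minimal sketch, assuming a row-major raw image with a fixed number of bytes per pixel; the function name `extent_byte_ranges` and its parameters are hypothetical and are not part of any VTK reader.

```python
def extent_byte_ranges(width, x0, x1, y0, y1, bytes_per_pixel=1):
    """Return (offset, length) pairs covering the half-open extent
    [x0, x1) x [y0, y1) of a row-major image that is `width` pixels wide."""
    ranges = []
    for y in range(y0, y1):
        # Each image row contributes one short, separate segment of the file.
        offset = (y * width + x0) * bytes_per_pixel
        length = (x1 - x0) * bytes_per_pixel
        ranges.append((offset, length))
    return ranges


# An 8x8 single-byte image divided into quadrants amongst four processes.
top_left = extent_byte_ranges(8, 0, 4, 0, 4)
top_right = extent_byte_ranges(8, 4, 8, 0, 4)
print(top_left)   # [(0, 4), (8, 4), (16, 4), (24, 4)]
print(top_right)  # [(4, 4), (12, 4), (20, 4), (28, 4)]
```

Each quadrant is a single block in 2D but becomes four 4-byte reads interleaved with its neighbor's reads in the file. For realistic image sizes the same pattern yields thousands of short reads per process.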

What readers do this:

Any reader that can read sub-pieces from a single file falls into this category. The raw image data format is the purest example, as it contains no metadata and is completely divided amongst the loading processes.

There are also many data formats that contain some metadata, which is loaded in the manner described in the #Common File, but the vast majority of the file is actual data that is divided amongst processors. These readers include all of the XML*Readers (serial, not parallel versions), the legacy VTK format reader, and the STL reader. The HDF5 format also falls in this category, although the layout is something of a black box to us.

Individual Files

Often, large amounts of data are split amongst many files. Sometimes this is because the original data format does not scale, but most of the time it is simply convenient. Large data sets are almost always generated by parallel programs, and it is easy and efficient for each process in the original parallel job to write out its own file.

How the individual files are divided amongst processors varies from reader to reader. If there are many more files than processors, then few files, if any, are shared between processors. For smaller numbers of larger files, sharing becomes more likely.
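As an illustration of one possible division, the sketch below hands each process a contiguous block of file indices. This is an assumption for illustration only; the actual readers each use their own scheme, and the name `assign_files` is hypothetical.

```python
def assign_files(num_files, num_procs):
    """Return, for each process rank, the list of file indices it reads.

    Files are handed out in contiguous blocks whose sizes differ by at
    most one. No file is shared between two processes."""
    base, extra = divmod(num_files, num_procs)
    assignment = []
    start = 0
    for rank in range(num_procs):
        # The first `extra` ranks take one additional file each.
        count = base + (1 if rank < extra else 0)
        assignment.append(list(range(start, start + count)))
        start += count
    return assignment


print(assign_files(10, 4))  # [[0, 1, 2], [3, 4, 5], [6, 7], [8, 9]]
```

When there are fewer files than processes, this scheme leaves some processes with nothing to read. A reader that wants to keep them busy has to split individual files between processes, which is where sharing comes in.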

Why it is inefficient:

Usually it is not. Having each process read from its own independent files means that individual reads are usually longer (providing the storage system with easier optimization) and that individual files are more likely to be loaded from individual disks.

Of course, ParaView is at the mercy of how the files were written to the storage system. It could be the case that files are distributed in a strange striped manner that encourages many short reads from a single disk. But this situation is less likely than a more organized layout.

There is also the circumstance that multiple processors still access a single file. This opens the same issues discussed in #Monolithic File. However, in this situation we have reduced the number of processors accessing an individual file, so the effects are less dramatic.

What readers do this:

The Exodus reader is the purest example of this form. Not only is the metadata repeated for each file, but this particular reader is designed such that no two processors read parts from the same file. However, the current implementation has all processes reading metadata (which is replicated in all of the files) from a single file. This is not strictly necessary (and is in fact a bad idea).

The rest of the file formats incorporating multiple files also have a central metadata file associated with them. These are listed in the #Common File section.

Parallel I/O Layer

Before we are able to optimize our readers for parallel reading from parallel disk, we need an I/O layer that will provide the basic essential tools for doing so. This layer will collect multiple reads from multiple processes and combine them into larger reads that can be streamed from disk more efficiently and distributed to the processes.
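The combining step can be sketched as a merge of byte ranges. The following is only an illustration of the idea under simple assumptions (requests are `(offset, length)` pairs against one file, gathered in one place); the name `coalesce_reads` and the `max_gap` parameter are hypothetical, and a real layer such as MPI I/O does considerably more.

```python
def coalesce_reads(requests, max_gap=0):
    """Merge (offset, length) read requests into fewer, larger reads.

    Requests that overlap, touch, or are separated by at most `max_gap`
    bytes are combined into a single read."""
    merged = []  # list of [start, end) pairs, kept sorted by start
    for offset, length in sorted(requests):
        end = offset + length
        if merged and offset <= merged[-1][1] + max_gap:
            # Extend the previous read instead of issuing a new one.
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([offset, end])
    return [(start, end - start) for start, end in merged]


# Two processes each request the left or right half of four 8-byte rows.
left = [(0, 4), (8, 4), (16, 4), (24, 4)]
right = [(4, 4), (12, 4), (20, 4), (28, 4)]
print(coalesce_reads(left + right))  # [(0, 32)]
```

Eight short reads collapse into one streaming read of 32 bytes, after which the layer would distribute the relevant slices back to the requesting processes.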

There exist several implementations of such an I/O layer, and we certainly do not want to implement our own from scratch. A good choice is the I/O layer that is part of the MPI version 2 specification and is available in most MPI distributions today. Due to its wide availability and efficiency, this is the layer that we would like to use.

However, ParaView must work in both serial and parallel environments. This means that MPI (and hence its I/O layer) is not always available. One way around this is to have multiple versions of each reader: one that reads using standard I/O and one optimized for parallel reads. Unfortunately, this approach would lead to a maintenance nightmare, causing more bugs and worse support.

Instead, we will build our own I/O layer which is really just a thin veneer over the MPI I/O layer. When MPI is available, we will use the MPI library. When it is not, we will swap out the layer for something that simply calls POSIX I/O functions.

- This makes a lot of sense. However, how will this work with readers that rely on 3rd party libraries? XDMF uses hdf5, Exodus uses netCDF. I am guessing implementing a reader that reads raw hdf5 or netCDF format would be a nightmare. This would probably work with the VTK file formats because they all use C++ streams to do IO. We could create an appropriate subclass that works with our IO layer. Actually, it may make sense to base this IO layer on streams (as long as we are only targeting C++). Berk 10:33, 8 Feb 2007 (EST)
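The swappable veneer described above might look something like the following. This is a rough sketch and not a proposed design: the class and function names (`ParallelFile`, `PosixFile`, `MpiFile`, `open_parallel_file`) are hypothetical, Python stands in for the eventual implementation language, and the MPI backend assumes the mpi4py bindings, which are imported only when a communicator is actually supplied.

```python
import os
from abc import ABC, abstractmethod


class ParallelFile(ABC):
    """Hypothetical reader-facing interface; not an existing VTK class."""

    @abstractmethod
    def read_at(self, offset, length):
        """Return bytes starting at byte `offset`, at most `length` of them."""

    @abstractmethod
    def close(self):
        """Release the underlying file handle."""


class PosixFile(ParallelFile):
    """Serial fallback that calls POSIX open/pread/close through `os`."""

    def __init__(self, path):
        self._fd = os.open(path, os.O_RDONLY)

    def read_at(self, offset, length):
        return os.pread(self._fd, length, offset)

    def close(self):
        os.close(self._fd)


class MpiFile(ParallelFile):
    """MPI I/O backend (assumes mpi4py is installed)."""

    def __init__(self, path, comm):
        from mpi4py import MPI  # lazy import: serial runs never need MPI
        self._fh = MPI.File.Open(comm, path, MPI.MODE_RDONLY)

    def read_at(self, offset, length):
        buf = bytearray(length)
        # Collective call: every rank in the communicator must take part.
        # A short read near end of file leaves the tail of buf zero-filled;
        # a fuller version would inspect the MPI status to trim it.
        self._fh.Read_at_all(offset, buf)
        return bytes(buf)

    def close(self):
        self._fh.Close()


def open_parallel_file(path, comm=None):
    """Pick the backend: MPI I/O when a communicator is given, POSIX otherwise."""
    return PosixFile(path) if comm is None else MpiFile(path, comm)
```

A reader written against `ParallelFile` calls `read_at` the same way in both environments. Note that this sketch does nothing to address the third-party library question raised in the comment above, since those libraries perform their own file access.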

Parallel I/O Layer Interface

MPI 2 I/O Implementation

POSIX I/O Implementation

Optimized Parallel Readers

Open Issues

This section captures the issues we anticipate in implementing the proposed system.

Third Party Libraries

Some file formats are simple enough to implement directly with code. Other formats were developed "in-house" as native VTK files. The rest of the formats rely on 3rd party libraries. When dealing with a 3rd party library, we are severely limited in the changes we can make on how we read the data. It is not feasible to make changes to the 3rd party library because it would soon become impossible to update to future versions of the library. Implementing and supporting readers that read the raw file data would be a nightmare.

So for many of the parallel I/O issues, we simply have to place ourselves in the hands of the developers of the third party library. However, there are a few things that we can do to better our situation.

- Enable native parallel I/O

- Assuming that we are dealing with a file format designed for parallel I/O, and the format is popular enough to justify having a ParaView reader, then it stands to reason that its library is at least reasonably good at reading files in parallel. It may be just a matter of enabling those features. For example, the library may have a parallel read mode that needs to be enabled. It may also require something like an MPI communicator that we could hopefully get from the VTK parallel I/O layer.

- Minimize potential for poor access

- Sure, we're stuck with the API implementation given, but we don't have to be stupid with how we use it. There may be some easy things we can do to minimize poor I/O access patterns. For example, rather than have all processes try to read the metadata from the same file at about the same time, read it in one process and distribute it.

- Feedback

- If all else fails, complain to the 3rd party. If they are serious about having a good and well used file format, they should be motivated to fix the problems. Failing that, we could simply discourage people from using that kind of file. Users probably won't need much motivation.
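The suggestion above of reading metadata in one process and distributing it can be sketched as follows. This is a minimal illustration under assumptions: `read_metadata_once` is a hypothetical helper, the communicator is expected to offer `Get_rank` and `bcast` in the style of mpi4py, and `SerialComm` is a stand-in that lets the same code run in a one-process job without MPI.

```python
class SerialComm:
    """Stand-in communicator for a one-process run (hypothetical helper).

    It mirrors the two mpi4py communicator methods used below."""

    def Get_rank(self):
        return 0

    def bcast(self, obj, root=0):
        return obj


def read_metadata_once(path, comm):
    """Read the metadata file on rank 0 only, then give every rank a copy."""
    data = None
    if comm.Get_rank() == 0:
        # Only one process touches the storage system.
        with open(path, "rb") as f:
            data = f.read()
    # Collective: all ranks call bcast; non-root ranks receive rank 0's bytes.
    return comm.bcast(data, root=0)
```

In a parallel job the same function could be handed mpi4py's `MPI.COMM_WORLD`. The storage system then sees a single read of the metadata file rather than one per process, and the third-party library is only asked to parse bytes that are already in memory, assuming it offers a way to do so.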