Parallel I/O

As the data sets we process with ParaView get bigger, we are finding that for many of our users a significant portion of the wait time in ParaView is I/O. We had originally assumed that moving our clusters to parallel disk drives with greater overall read performance would largely fix the problem. Unfortunately, in practice we have found that our read rates from the storage system are often far below its potential.

This document analyzes the parallel I/O operations performed by VTK and ParaView (specifically reads since they are by far the most common), hypothesizes on how these might impede I/O performance, and proposes a mechanism that will improve the I/O performance.

File Access Patterns

VTK and ParaView, by design, support many different readers with many different formats. Rather than iterate over every reader that exists or could exist, we categorize their file access patterns here. We also try to identify which readers perform which access patterns. Readers that do not read in parallel data will not be considered for obvious reasons.

Common File

There are some cases where all the processes in a parallel job will each read in the entire contents of a single file. The parallel data readers are usually smart enough to read in only the portion of the data that they need. However, there is usually a collection of "metadata" that all processes require to read in the actual data. This metadata includes things like domain extents, number formats, and what data is attached. Often this metadata is all packaged up into its own file (along with pointers to #Individual Files that hold the actual data).

In this case, every process independently reads the entire metadata file because all the readers are designed to perform reads with no communication amongst them. This is so that the readers will also work in a sequential parallel read mode where data is broken up over time rather than across processors and there are no other processors with which to collaborate.

Why it is inefficient:

The storage system is being bombarded with a bunch of similar read requests at about the same time. All the requests are likely to be near each other (with respect to offsets in the file) and hence on the same disk. Unless the read requests are synchronized well and the parallel storage device has really good caching that works across multiple clients (neither of which is very likely), the storage system will be forced to constantly move the head on the disk to satisfy all the incoming requests without starving any of them. Moving the head causes a delay measured in milliseconds, which slows the reading to a crawl.

What readers do this:

All the readers that have a metadata file use this access pattern. This includes the PVDReader as well as all of the XMLP*Readers and the pvtkfile (partitioned legacy VTK files). The EnSight server of servers file is also a metadata file that points to individual case files and must be read in by (or distributed to) all processes. The XDMF format also comprises a metadata file that describes the data in an HDF5 file.

Although these metadata files are almost always small, especially when compared with the actual data, reading these files can be surprisingly slow. For example, I recently observed a 64 process job take several minutes to read in a small (~7KB) pvd metadata file. In contrast, the same 64 processes could read in 1.2GB of actual data in less than 10 seconds.

Monolithic File

Data formats that handle small to medium sized data are almost always contained in a single file for convenience. If these data formats can be scaled up, they often are. For example, raw image data can be placed in an image file of any size. Although generating such a large single file often requires some dedicated processing, it is sometimes done for convenience and usability.

For this type of file to be read into ParaView in a parallel environment, it is important that the reader be able to read in a portion of the data. For example, the raw data image reader accepts extents for the data, and then reads in only the parts of the file associated with those extents.

Why it is inefficient:

As in the case of the #Common File, we have a lot of read accesses happening more or less simultaneously to a single file because each process is trying to read its portion of the data. However, in this case the problems are compounded because each process is reading a different portion of the data. Thus, whatever caching strategy the I/O system is implementing has little to no chance of satisfying multiple requests with a single read.

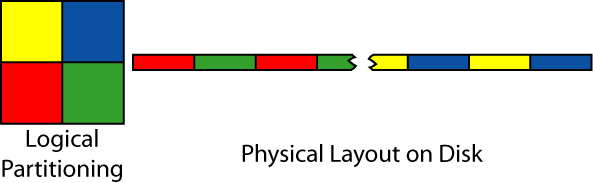

To make matters worse, the reads are often set up in a way that minimizes I/O performance. The reason for this is that even though extents are logically cohesive, the pieces are actually scattered over various portions of the disk. For example, in the accompanying image, a 2D image is divided amongst four processors. Although each piece is contiguous in two dimensions, each partition is scattered in small pieces throughout the linear file on disk.

So, in practice, the storage system is bombarded by many requests for short segments of data. With no a priori knowledge, the storage system is required to fulfill the requests in a more or less ordered manner so that no process gets starved. To satisfy these requests, the disk is forced to move its reading head back and forth, thereby slowing the read down to a crawl.
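To make the scattering concrete, the following is a minimal sketch of how one process's rectangular piece of a 2D image maps onto the linear file. It assumes a headerless, row-major file; the `Segment` struct and the `SubExtentSegments` function are hypothetical names used only for illustration and are not part of any reader.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One contiguous run of bytes in the linear file.
struct Segment
{
  std::size_t Offset; // byte offset from the start of the file
  std::size_t Length; // number of contiguous bytes
};

// List the contiguous file segments covered by the inclusive sub-extent
// [x0, x1] x [y0, y1] of a row-major 2D image that is `width` pixels wide,
// with `bytesPerPixel` bytes per pixel and no header (an assumption).
std::vector<Segment> SubExtentSegments(std::size_t width, std::size_t bytesPerPixel,
                                       std::size_t x0, std::size_t x1,
                                       std::size_t y0, std::size_t y1)
{
  assert(x0 <= x1 && x1 < width && y0 <= y1);
  std::vector<Segment> segments;
  for (std::size_t y = y0; y <= y1; ++y)
  {
    // Each image row contributes one short run. Consecutive runs are
    // separated by the pixels that belong to other partitions.
    segments.push_back({ (y * width + x0) * bytesPerPixel,
                         (x1 - x0 + 1) * bytesPerPixel });
  }
  return segments;
}
```

For an 8x8 image with one byte per pixel, the quadrant covering columns 4 to 7 and rows 0 to 3 comes out as four separate 4-byte runs at offsets 4, 12, 20, and 28, rather than one 16-byte read.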

What readers do this:

Any reader that can read sub-pieces from a single file falls into this category. The raw image data format fits most completely, as it contains no metadata and is completely divided amongst the loading processes.

There are also many data formats that contain some metadata, which is loaded in the manner described in the #Common File, but the vast majority of the file is actual data that is divided amongst processors. These readers include all of the XML*Readers (serial, not parallel versions), the legacy VTK format reader, and the STL reader. The HDF5 format also falls in this category, although the layout is something of a black box to us.

Individual Files

Often large amounts of data are split amongst many files. Sometimes this is because the original data format does not scale, but most times it is simply convenient. Large data sets are almost always generated by parallel programs, and it is easy and efficient for each process in the original parallel job to write out its own file.

How the individual files are divided amongst processors varies from reader to reader. If there are many more files than processors, then the sharing is minimal. For smaller numbers of larger files, sharing becomes more likely.

Why it is inefficient:

Usually it is not. Having each process read from its own independent files means that individual reads are usually longer (providing the storage system with easier optimization) and that individual files are more likely to be loaded from individual disks.

Of course, ParaView is at the mercy of how the files were written to the storage system. It could be the case that files are distributed in a strange striped manner that encourages many short reads from a single disk. But this situation is less likely than a more organized layout.

There is also the circumstance that multiple processors still access a single file. This opens the same issues discussed in #Monolithic File. However, in this situation we have reduced the number of processors accessing an individual file, so the effects are less dramatic.

What readers do this:

The Exodus reader is the purest example of this form. Not only is the metadata repeated for each file, but this particular reader is designed such that no two processors read parts from the same file. However, the current implementation has all processes reading metadata (which is replicated in all of the files) from a single file. This is not strictly necessary (and is in fact a bad idea).

The rest of the file formats incorporating multiple files also have a central metadata file associated with them. These are listed in the #Common File section.

Parallel I/O Layer

Before we are able to optimize our readers for parallel reading from parallel disk, we need an I/O layer that will provide the basic essential tools for doing so. This layer will collect multiple reads from multiple processes and combine them into larger reads that can be streamed from disk more efficiently and distributed to the processes.

There exist several implementations of such an I/O layer, and we certainly do not want to implement our own from scratch. A good choice is the I/O layer that is part of the MPI version 2 specification and is available on most MPI distributions today. Due to its wide availability and efficiency, this is the layer that we would like to use.

However, ParaView must work in both serial and parallel environments. This means that MPI (and hence its I/O layer) is not always available. One way around this is to have multiple versions of each reader: one that reads using standard I/O and one optimized for parallel reads. Unfortunately, this approach would lead to a maintenance nightmare, causing more bugs and worse support.

Instead, we will build our own I/O layer which is really just a thin veneer over the MPI I/O layer. When MPI is available, we will use the MPI library. When it is not, we will swap out the layer for something that simply calls POSIX I/O functions.

- This makes a lot of sense. However, how will this work with readers that rely on 3rd party libraries? XDMF uses hdf5, Exodus uses netCDF. I am guessing implementing a reader that reads raw hdf5 or netCDF format would be a nightmare. This would probably work with the VTK file formats because they all use C++ streams to do IO. We could create an appropriate subclass that works with our IO layer. Actually, it may make sense to base this IO layer on streams (as long as we are only targeting C++). Berk 10:33, 8 Feb 2007 (EST)

- Good point on the 3rd party libraries. I added a section to #Open Issues that addresses this. I'm not sure how well the stream implementation will work. It could get very messy (i.e. deadlocked) if stream accesses are supposed to be collective. I don't think it will work very well with the MPI-2 I/O. Lee Ward mentioned some code he was working on that did distributed caching. Maybe that would fit the streams better. --Ken 18:42, 8 Feb 2007 (EST)
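The thin veneer proposed in this section can be sketched roughly as follows. This is only an illustration under stated assumptions: `FileBackend`, `StdioFileBackend`, `MakeFileBackend`, and the `SKETCH_USE_MPIO` macro mentioned in the comments are hypothetical names, and only the non-MPI fallback is shown. The actual interface we propose is described in the following sections.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <memory>

// Hypothetical minimal interface that readers would program against.
class FileBackend
{
public:
  virtual ~FileBackend() = default;
  virtual bool Open(const char* filename) = 0;
  virtual std::size_t Read(void* buf, std::size_t count) = 0;
  virtual void Close() = 0;
};

// Fallback used when MPI is unavailable: plain C stdio calls.
class StdioFileBackend : public FileBackend
{
public:
  ~StdioFileBackend() override { this->Close(); }

  bool Open(const char* filename) override
  {
    this->Close();
    this->File = std::fopen(filename, "rb");
    return this->File != nullptr;
  }

  std::size_t Read(void* buf, std::size_t count) override
  {
    assert(this->File != nullptr);
    return std::fread(buf, 1, count, this->File);
  }

  void Close() override
  {
    if (this->File)
    {
      std::fclose(this->File);
      this->File = nullptr;
    }
  }

private:
  std::FILE* File = nullptr;
};

// Readers only ever call this factory, so swapping the layer is a build-time
// decision. An MPI-backed subclass would be returned here instead when a
// (hypothetical) SKETCH_USE_MPIO macro is defined.
std::unique_ptr<FileBackend> MakeFileBackend()
{
  return std::unique_ptr<FileBackend>(new StdioFileBackend);
}
```

The point of the design is that a reader is written once against the abstract interface, and only the factory knows which implementation is in use.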

Parallel I/O Layer Interface

The base class of the parallel I/O layer is called vtkParallelIO. It is an abstract class that is subclassed for each implementation of the parallel I/O system. The vtkParallelIO class itself does not actually do any I/O with respect to file reads and writes. Rather, it acts like a factory that creates vtkParallelFiles, file handle objects. The vtkParallelFile is also an abstract class, this time with the facilities to open, close, read, and write files.

- Are these good choices for class names? Is vtkParallelIO going to be confusing because 'l' and 'I' look similar in many fonts? Is vtkParallelFile a misnomer? It's not the file that is necessarily parallel but rather the access. Would vtkParallelIOFile be more descriptive? Is it worth being harder to type? --Ken 15:08, 9 Feb 2007 (EST)

vtkParallelIO

The definition for vtkParallelIO goes more or less like this:

    class vtkParallelIO : public vtkObject
    {
    public:
      vtkTypeRevisionMacro(vtkParallelIO, vtkObject);
      static vtkParallelIO *New();
      virtual void PrintSelf(ostream &os, vtkIndent indent);

      static vtkParallelIO *GetGlobalParallelIO();
      static void SetGlobalParallelIO(vtkParallelIO *parallelIO);

      virtual vtkMultiProcessController *GetController() = 0;

      virtual vtkParallelFile *CreateFileHandle();
      virtual vtkParallelFile *CreateFileHandle(vtkMultiProcessController *controller);
    };

As can be seen, the major features of this class are the global object, the controller, and the file handle creation.

Global Parallel I/O Object

The vtkParallelIO class maintains a single global instance of itself. This provides a system-wide default to use for parallel I/O. The intended use is for each VTK component that potentially performs I/O in a parallel setting to have an ivar that holds a vtkParallelIO object. These ivars should be initialized with vtkParallelIO::GetGlobalParallelIO. This allows an application to set a system-wide parallel I/O solution with vtkParallelIO::SetGlobalParallelIO or to maintain a different parallel I/O solution for each reader or writer by setting the ivar on individual VTK objects.
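The intended relationship between the global object and the per-component ivar might look like the sketch below. The `ParallelIO` and `Reader` classes are simplified stand-ins, not VTK classes, and they use raw non-owning pointers where real VTK code would reference count.

```cpp
#include <cassert>

// Stand-in for vtkParallelIO; only the global-default logic is sketched.
class ParallelIO
{
public:
  static ParallelIO* GetGlobalParallelIO() { return GlobalInstance(); }
  static void SetGlobalParallelIO(ParallelIO* io) { GlobalInstance() = io; }

private:
  // Function-local static avoids static initialization order problems.
  static ParallelIO*& GlobalInstance()
  {
    static ParallelIO* instance = nullptr;
    return instance;
  }
};

// Hypothetical reader holding the ivar described above.
class Reader
{
public:
  // The ivar starts out as whatever the global default is at construction.
  Reader() : IO(ParallelIO::GetGlobalParallelIO()) {}

  // An application can still override the choice for one reader only.
  void SetParallelIO(ParallelIO* io) { this->IO = io; }
  ParallelIO* GetParallelIO() const { return this->IO; }

private:
  ParallelIO* IO; // not owned in this sketch
};
```

An application would call `SetGlobalParallelIO` once at startup and construct its readers afterwards. Any reader that needs a different I/O solution gets it through `SetParallelIO` without affecting the others.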

Parallel I/O Controller

Each vtkParallelIO object necessarily has its own controller object that is tied to the algorithm used for parallel I/O. Having a copy of this controller may be useful for performing communication and synchronization outside of that provided by the parallel I/O system. It also provides a convenient way for a reader to determine if it is being called in streaming or data parallel mode.

File Handle Creation

As stated previously, the main function of vtkParallelIO is to act as a factory for parallel I/O file handles. The CreateFileHandle method does this by creating a new vtkParallelFile that implements the correct parallel I/O functions for the chosen parallel I/O layer. The returned object needs to be deleted by the calling code.

There is a special version of CreateFileHandle that takes in a controller that will be used by the created parallel file. The default controller used is the same one as held by the vtkParallelIO object. Setting a different controller in the created file handle allows the calling code to do things like access a file with a subset of processors in the overall system. There may be limits on the controller used. For example, a vtkParallelIO implemented using MPI will refuse any controller that is not a vtkMPIController.
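A minimal sketch of the two CreateFileHandle variants and the controller check follows. All class names here are stand-ins invented for the illustration. The sketch also returns `std::unique_ptr` for brevity, whereas the proposed interface returns a raw pointer that the caller deletes, and it signals refusal with a null handle, which is only one possible convention.

```cpp
#include <cassert>
#include <memory>

// Stand-ins for vtkMultiProcessController and two of its subclasses.
class Controller { public: virtual ~Controller() = default; };
class MPIControllerStandIn : public Controller {};
class DummyControllerStandIn : public Controller {};

// Stand-in for vtkParallelFile; it only remembers its controller.
class FileHandle
{
public:
  explicit FileHandle(Controller* c) : Ctrl(c) {}
  Controller* GetController() const { return this->Ctrl; }

private:
  Controller* Ctrl;
};

// Stand-in for an MPI-backed vtkParallelIO.
class MPIParallelIOStandIn
{
public:
  explicit MPIParallelIOStandIn(MPIControllerStandIn* c) : Ctrl(c) {}

  // Default: the handle shares the factory's own controller.
  std::unique_ptr<FileHandle> CreateFileHandle() const
  {
    return this->CreateFileHandle(this->Ctrl);
  }

  // Override: e.g. a controller covering a subset of the processes.
  // Returns null when the controller is not a kind this layer can use.
  std::unique_ptr<FileHandle> CreateFileHandle(Controller* c) const
  {
    if (dynamic_cast<MPIControllerStandIn*>(c) == nullptr)
    {
      return nullptr;
    }
    return std::unique_ptr<FileHandle>(new FileHandle(c));
  }

private:
  MPIControllerStandIn* Ctrl;
};
```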

vtkParallelFile

The declaration of vtkParallelFile goes more or less like this:

    class vtkParallelFile : public vtkObject
    {
    public:
      vtkTypeRevisionMacro(vtkParallelFile, vtkObject);
      virtual void PrintSelf(ostream &os, vtkIndent indent);

      virtual int Open(const char *filename, int amode) = 0;
      virtual void Close() = 0;

      virtual int Read(void *buf, int count) = 0;
      virtual int ReadAll(void *buf, int count) = 0;
      virtual int Write(void *buf, int count) = 0;
      virtual int WriteAll(void *buf, int count) = 0;

      virtual off_t GetPosition() = 0;
      virtual void Seek(off_t offset, int whence) = 0;

      virtual void SetViewToAll() = 0;
      virtual void SetViewToSubArray(int numDims,
                                     int *arrayOfSizes,
                                     int *arrayOfSubSizes,
                                     int *arrayOfStarts) = 0;

      virtual vtkMultiProcessController *GetController() = 0;
      virtual int IsOpen() = 0;
      virtual off_t GetFileSize() = 0;
    };

We can clearly see that vtkParallelFile allows us to do basic file manipulation: open/close, read/write, and some simple queries. The concepts are familiar to anyone experienced with POSIX I/O (i.e. anyone who knows C or C++). In addition, we have added collective reads/writes and views to the list of operations.

Open and Close

vtkParallelFile has the obvious Open and Close methods. Because these files are accessed in parallel, both of these methods are collective. That is, every process in the multi process controller must call the function before it can complete. All processes must call Open with the same arguments.

The access mode parameter (amode) is a bitwise OR of a familiar looking set of flags: VTK_MODE_RDONLY, VTK_MODE_WRONLY, VTK_MODE_RDWR, VTK_MODE_CREATE, VTK_MODE_EXCL, VTK_MODE_APPEND, and VTK_MODE_TRUNC.

- One thing that is missing here is passing hints to the I/O library. MPI 2 comes with a set of predefined hints that I guess could be useful. I'm just not sure how to expose that. I'm thinking of using a vtkInformation to store a set of supported hints, but it's kind of a pain to set up all the information keys. Maybe I'll be lazy and just wait for a situation where the parallel I/O won't work well without a hint. --Ken 18:20, 9 Feb 2007 (EST)
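As an illustration of how the access mode flags could be handled by a POSIX implementation, here is a minimal sketch that translates an amode mask into flags for the POSIX open call. The numeric values given to the VTK_MODE_* flags are assumptions made for this example (the design does not fix them), and `ToPosixOpenFlags` is a hypothetical helper.

```cpp
#include <cassert>
#include <fcntl.h>

// Hypothetical flag values; the design names the flags but not their values.
enum
{
  VTK_MODE_RDONLY = 1 << 0,
  VTK_MODE_WRONLY = 1 << 1,
  VTK_MODE_RDWR   = 1 << 2,
  VTK_MODE_CREATE = 1 << 3,
  VTK_MODE_EXCL   = 1 << 4,
  VTK_MODE_APPEND = 1 << 5,
  VTK_MODE_TRUNC  = 1 << 6
};

// Translate an amode bit mask into flags for POSIX open(2).
// Returns -1 unless exactly one of RDONLY, WRONLY, RDWR is set.
int ToPosixOpenFlags(int amode)
{
  int flags = 0;
  switch (amode & (VTK_MODE_RDONLY | VTK_MODE_WRONLY | VTK_MODE_RDWR))
  {
    case VTK_MODE_RDONLY: flags = O_RDONLY; break;
    case VTK_MODE_WRONLY: flags = O_WRONLY; break;
    case VTK_MODE_RDWR:   flags = O_RDWR;   break;
    default: return -1; // none or several access modes requested
  }
  if (amode & VTK_MODE_CREATE) { flags |= O_CREAT; }
  if (amode & VTK_MODE_EXCL)   { flags |= O_EXCL; }
  if (amode & VTK_MODE_APPEND) { flags |= O_APPEND; }
  if (amode & VTK_MODE_TRUNC)  { flags |= O_TRUNC; }
  return flags;
}
```

A writer creating a fresh file would pass something like `VTK_MODE_WRONLY | VTK_MODE_CREATE | VTK_MODE_TRUNC`, while the readers discussed in this document only need `VTK_MODE_RDONLY`.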

Read and Write

Every process has its own local file pointer that it uses to read and write. This is basically equivalent to what you would have if you simply called the POSIX fopen on all of the processes. Furthermore, the Read and Write methods perform the same kind of file access + file pointer move that you would expect from fread and fwrite.

The new features here are the ReadAll and WriteAll collective methods. Like their non-collective counterparts, they perform the same file access + pointer move operation. The difference is that internally the reads and writes from the separate processes will be collected. If possible, the I/O system will read in data with fewer, larger reads and then distribute the data to the appropriate processes. In essence, they organize multiple reads or writes into a single one. Because these are collective, they need to be called on all processes before the call can complete.

A major component of MPI 2 reads and writes is the ability to perform the operations on a specific data type. Although the writers may be able to take advantage of this (and we don't care much about the writers), the readers usually read things on the byte level even if there are larger data types because they still have to contend with things like endian conversion. Although MPI 2 can handle automatic conversion, there does not seem to be a way to control the native data types in the file.

Views

Another feature of vtkParallelFile is its ability to establish a view on the file. A view is basically a mask placed over the file that defines the parts of the file that a given process will read. This allows, for example, the application to read an array distributed over multiple parts of a file in a single read.

When performing a normal read operation, this optimization does not help much. The head on a disk will still have to skip over parts of the disk to pick up all the pieces. Where this becomes useful is when performing a collective read. Each process can establish its own view of the file, and combined these views may cover a larger contiguous section of the file. When the collective read is performed, the read can stream data off the disk in the most efficient way possible.
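To see why combined views help, consider the following sketch of what a collective layer might do internally with the byte ranges requested by all processes. This is an illustration of the idea only, not a description of how any particular MPI implementation works; `ByteRange` and `CoalesceRequests` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// A run of bytes requested by some process.
struct ByteRange
{
  std::size_t Offset;
  std::size_t Length;
};

// Merge the byte ranges requested by all processes into the smallest set
// of contiguous reads. Ranges that touch or overlap are coalesced.
std::vector<ByteRange> CoalesceRequests(std::vector<ByteRange> requests)
{
  std::sort(requests.begin(), requests.end(),
            [](const ByteRange& a, const ByteRange& b) { return a.Offset < b.Offset; });
  std::vector<ByteRange> merged;
  for (const ByteRange& r : requests)
  {
    if (r.Length == 0)
    {
      continue; // empty requests contribute nothing
    }
    if (!merged.empty() &&
        r.Offset <= merged.back().Offset + merged.back().Length)
    {
      // Extend the previous read to cover this request as well.
      const std::size_t end = std::max(merged.back().Offset + merged.back().Length,
                                       r.Offset + r.Length);
      merged.back().Length = end - merged.back().Offset;
    }
    else
    {
      merged.push_back(r);
    }
  }
  return merged;
}
```

If two processes each view one half of every row of an 8x4 byte image, their eight interleaved 4-byte requests coalesce into a single 32-byte read, after which the layer only has to distribute the bytes to their owners.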

Queries

Finally, there are a few methods to get information about the state of the file. For reference, you can get the controller that is managing the parallel I/O. You can also check to see if the file is open or how big the file currently is.

MPI 2 I/O Implementation

The MPI versions of the vtkParallelIO and vtkParallelFile subclasses are straightforward to implement. The pure virtual methods in vtkParallelFile have a clear mapping to the MPI_File_* functions.

There are several features of MPI I/O that are not implemented in vtkParallelFile. Some notable omissions are explicit offsets, shared file pointers, nonblocking operations, data types, and most file view specifications. These omissions are intentional because everything that we add, we need to make sure we implement it correctly for the abstract interface and POSIX implementation. Many of these features are difficult to create and maintain. Instead, we are going for a 90% solution. That is, our feature set will be sufficient 90% of the time. To get something useful we need an implementation that is tractable, and 90% is a lot better than 0%.

POSIX I/O Implementation

The POSIX interface will work on the assumption that a process is reading a file independently. The vtkParallelIO will use the vtkDummyController, which fakes a parallel job with one process. With this assumption, the collective aspect of reads and writes is meaningless. The POSIX implementation can still be used when the read is actually parallel, but the read may become much less efficient.

The majority of the methods in vtkParallelFile have a direct mapping to the POSIX I/O functions. The two exceptions are the collective operations and the views. The collective operations can simply be mapped to the normal POSIX I/O operations since there is only one process.

The views are a different story. The POSIX implementation will have to make the appropriate adjustments to the file position and break up reads and writes accordingly. This will probably be the most time consuming part of the implementation (not counting modifying the readers). For this reason, we limited the views to only those that are most likely to be needed.
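As a rough idea of what the POSIX side of a sub-array view involves, the sketch below reads a sub-array by seeking to and reading each contiguous run in turn. It assumes row-major order with the last dimension varying fastest, one byte per element, and no file header; `ReadSubArray` is a hypothetical free function rather than a method of the proposed classes. The arguments mirror those of SetViewToSubArray.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <vector>

// Read the sub-array described by sizes/subSizes/starts from `file` into
// `out`. Returns true if every run was read completely.
bool ReadSubArray(std::FILE* file, const std::vector<std::size_t>& sizes,
                  const std::vector<std::size_t>& subSizes,
                  const std::vector<std::size_t>& starts,
                  std::vector<unsigned char>& out)
{
  const std::size_t nd = sizes.size();
  assert(nd > 0 && subSizes.size() == nd && starts.size() == nd);
  std::size_t total = 1;
  for (std::size_t d = 0; d < nd; ++d)
  {
    assert(starts[d] + subSizes[d] <= sizes[d]);
    total *= subSizes[d];
  }
  out.resize(total);
  if (total == 0)
  {
    return true;
  }

  // Only runs along the last dimension are contiguous in the file.
  const std::size_t runLength = subSizes[nd - 1];
  std::vector<std::size_t> index(nd, 0); // position within the sub-array
  std::size_t filled = 0;
  while (filled < total)
  {
    // Linear offset of the run's first element in the full array.
    std::size_t offset = 0;
    for (std::size_t d = 0; d < nd; ++d)
    {
      offset = offset * sizes[d] + starts[d] + index[d];
    }
    // A real implementation would need a 64-bit seek for large files.
    if (std::fseek(file, static_cast<long>(offset), SEEK_SET) != 0 ||
        std::fread(&out[filled], 1, runLength, file) != runLength)
    {
      return false;
    }
    filled += runLength;
    // Advance the multi-index over every dimension except the last.
    for (std::size_t d = nd - 1; d-- > 0;)
    {
      if (++index[d] < subSizes[d])
      {
        break;
      }
      index[d] = 0;
    }
  }
  return true;
}
```

Each run costs one seek plus one short read, which is exactly the access pattern criticized under #Monolithic File. That is why this path is meant as a fallback rather than as an optimization.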

Optimized Parallel Readers

This section captures the general approach we plan to take in improving the parallel readers given the I/O layer previously described.

Exodus

Because the Exodus reader reads from parallel files, and in fact enforces this, the Exodus reader is fairly efficient at reading the parallel files, assuming they were written to the I/O device in a way that allows us to efficiently read them back in parallel.

We are continuing to look at this reader, however, for further improvements. Right now we are probably reading the metadata poorly. The worst offence is that all processes initially read in the metadata from a single file. We can improve that by getting the controller from the parallel I/O layer, reading the metadata in the root node, and then broadcasting the information to everyone else. There may also be an issue with reading metadata multiple times. We will look into this.

PVD

A PVD file is basically a metadata file that points to other VTK files. Right now the metadata file is being independently streamed in by every process. We will change that to be read into a buffer using a collective read. That should allow the parallel I/O system to stream the (small) file in much faster.

Parallel VTK

These parallel VTK files are basically a metadata file that points to a bunch of XML type VTK files. (There is also a partitioned legacy format that basically works the same but points to legacy VTK files.) Like the PVD format, there is nothing really to it except to read the metadata file and then delegate to other readers. Again, we will make a change where this file is read collectively into a buffer.

Native Serial VTK

The native VTK files come in two types, the legacy formats and the new XML forms. Both are serial files, but the readers have some capability to divide the information into multiple processors. Although we could benefit from collective reads, we are going to punt, at least initially, on making this conversion. The format, particularly that for the XML versions, is pretty complicated with metadata and actual data dispersed through the file in varying lengths.

So although we could make improvements, we are struggling to find a use case. These files are typically generated on a single machine. Parallel instances of ParaView only write out #Parallel VTK files. For this to be an issue, a user would have to write out the file on a large-memory 64-bit machine (probably with an amazing amount of processor speed) and then read it back in on a more conventional cluster. That will probably never happen. A smaller issue might be reading in a parallel file on a parallel job that is larger than the one it was written on. That would result in files shared by small numbers of processes (such as 2). In this case, there are simpler solutions than trying to implement full collective reads. For example, we could offer the possibility of writing out to more files than processes and/or simply block all other processes while a file is being read.

HDF5

HDF5 is an external library. The library was designed to be read and written by parallel programs, so presumably there has been some thought taken to make the parallel access efficient. There may be some API specific to parallel reads/writes, so we should investigate to see if there is anything else we should be doing.

We are also tangentially affiliated with creating the next generation HDF5 format. We should make sure that parallel I/O issues are being addressed (rather than just assume that they are).

EnSight

EnSight files work pretty much like the VTK files. There is a single metadata file (called a server of servers file) that points to a bunch of serial files. On the first pass, we will simply read the metadata file using a collective read, and then allow the files it points to to be read individually.

SPCTH

The SPCTH reader has each process independently read in a unique set of files. We have a customer currently using the SPCTH reader in parallel on a large data set with no complaints. So far, we see no need to modify the reader, so we will probably not address this unless we have extra time.

Raw Image Reader

The raw image reader reads in a brick of bytes (or floats or whatever) that are either dumped in a single file or in a set of slices. We can greatly improve the read performance by establishing appropriate views over the file and doing a collective read on it.
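One simple way to choose the views for a brick stored in a single file is to give each process a slab of whole slices. The sketch below computes sub-array arguments in the style of SetViewToSubArray for a given rank. The `SlabView` struct and `SlabForRank` function are hypothetical, and splitting along the slowest-varying dimension is an assumption of this example rather than something the reader is required to do.

```cpp
#include <cassert>

// Sub-array arguments for one process, slowest-varying dimension first.
struct SlabView
{
  int Sizes[3];    // full brick: slices, rows, columns
  int SubSizes[3]; // this process's piece
  int Starts[3];   // where the piece begins in the brick
};

// Split a brick into contiguous slabs of whole slices, one per process.
// The first (slices % numProcs) ranks receive one extra slice.
SlabView SlabForRank(int slices, int rows, int columns, int rank, int numProcs)
{
  assert(numProcs > 0 && rank >= 0 && rank < numProcs);
  const int base = slices / numProcs;
  const int extra = slices % numProcs;
  const int count = base + (rank < extra ? 1 : 0);
  const int start = rank * base + (rank < extra ? rank : extra);
  SlabView view = { { slices, rows, columns },
                    { count, rows, columns },
                    { start, 0, 0 } };
  return view;
}
```

With 10 slices and 4 processes, the ranks receive 3, 3, 2, and 2 slices starting at slices 0, 3, 6, and 8. Because the slabs tile the file end to end, the combined views cover one contiguous range, which is the situation in which a collective read should be able to stream the file.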

Open Issues

This section captures the issues we anticipate in implementing the proposed system.

IO Dependency on Parallel

Clearly the vtkParallelIO and vtkParallelFile classes depend on some of the Parallel classes. It is also clear that several of the readers currently in IO will want to take advantage of the parallel IO classes. The issue at hand is that the Parallel library currently depends on the IO library, not the other way around. There are several potential solutions:

- Reverse library dependency

- Switch around the IO-Parallel libraries dependencies and make the compilation of the Parallel library mandatory. I don't know if any Parallel libraries actually use the IO classes, but if they do that would be a problem. It may also cause grief to people who cannot compile the Parallel library easily.

- Move parallel classes

- We could move the vtkMultiProcessController, vtkDummyController, and other dependent classes from Parallel to Filters or Common. Of course, that further obfuscates the partitioning of classes in libraries.

- Move IO classes

- We could also move the IO classes that will be optimized for reading files in parallel. There may be a lot of files to move, and it again may be difficult for someone to understand what files go where and for what reason.

- Superclasses

- One possible solution might be to make a superclass to vtkParallelIO and vtkParallelFile that have the parallel dependencies stripped out. But once you do that, the classes become useless for a lot of operations.

Instantiating MPI Parallel I/O

One issue with using the MPI parallel I/O library is that it may not be available even when MPI is available. Thus, there will have to be some separate C macro (VTK_USE_MPIO) that specifies if vtkMPIParallelIO exists, and applications will have to separately check that and change their behavior accordingly. That can be a pain.

Maybe it would be better if the multi process controller was able to instantiate the parallel I/O class for the user. There could be a CreateParallelIO method that would return a new instance of vtkParallelIO properly initialized or just set the global parallel I/O object (less power but also less worrying about pointers). This method would obviously be implemented in vtkMPIController. I'm not sure if there should be something in the vtkMultiProcessController superclass.

Another solution is to always present a vtkMPIParallelIO class regardless of whether MPI I/O is available. When it's not, it can just use a vtkPOSIXParallelIO under the covers.

Missing Multi Process Controller Functionality

There is some important functionality missing from vtkMultiProcessController that is useful for parallel I/O, such as subsetting the communicator or performing collective communications like broadcast, gather, and reduce. Instead, these things were added ad hoc to the vtkMPIController and vtkMPICommunicator subclasses.

OK, so the only two controllers that we will actually ever use are vtkMPIController and vtkDummyController, the latter of which has basically no-ops for implementation. However, this still requires the code to do lots of checks about the controller type and wrap all this in preprocessor checks to see if MPI is even available in the first place. Thus, we should add some basic implementations to the base vtkMultiProcessController class.

This problem is not limited to parallel I/O. Lots of situations can benefit from these operations, as evidenced by the specialized methods in vtkMultiProcessController. This is also addressed in the D4 Design.
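A sketch of what a basic implementation in the base class could look like is given below, together with reader-side code that uses it without any type checks or preprocessor guards. The `Controller` class, its `Broadcast` signature and return convention, and the `LoadMetadata` function are assumptions made for this illustration, and the file read is faked.

```cpp
#include <cassert>
#include <cstddef>

// Stand-in for vtkMultiProcessController with a default collective.
class Controller
{
public:
  virtual ~Controller() = default;
  virtual int GetNumberOfProcesses() const { return 1; }
  virtual int GetLocalProcessId() const { return 0; }

  // Default broadcast: with one process the root already holds the data,
  // so there is nothing to send. Returns 1 on success and 0 on failure.
  // A multi-process subclass would override this with real communication.
  virtual int Broadcast(void* data, std::size_t length, int rootProcess)
  {
    (void)data;
    (void)length;
    return (this->GetNumberOfProcesses() == 1 && rootProcess == 0) ? 1 : 0;
  }
};

// Stand-in for vtkDummyController: the defaults are all it needs.
class DummyControllerStandIn : public Controller {};

// Reader-side code: the root obtains the metadata and everyone else
// receives it, regardless of which controller is in use.
bool LoadMetadata(Controller& controller, char* buffer, std::size_t length)
{
  if (controller.GetLocalProcessId() == 0)
  {
    // Only the root would touch the file; here the read is faked.
    for (std::size_t i = 0; i < length; ++i)
    {
      buffer[i] = 'm';
    }
  }
  return controller.Broadcast(buffer, length, 0) != 0;
}
```

The same `LoadMetadata` code path would then serve both the serial case and the parallel case, which is the read-on-root-and-distribute pattern suggested for metadata elsewhere in this document.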

Third Party Libraries

Some file formats are simple enough to implement directly with code. Other formats were developed "in-house" as native VTK files. The rest of the formats rely on 3rd party libraries. When dealing with a 3rd party library, we are severely limited in the changes we can make on how we read the data. It is not feasible to make changes to the 3rd party library because it would soon become impossible to update to future versions of the library. Implementing and supporting readers that read the raw file data would be a nightmare.

So for many of the parallel I/O issues, we simply have to place ourselves in the hands of the developers of the third party library. However, there are a few things that we can do to better our situation.

- Enable native parallel I/O

- Assuming that we are dealing with a file format designed for parallel I/O, and the format is popular enough to justify having a ParaView reader, then it stands to reason that the library is at least reasonably good at reading files in parallel. It may be just a matter of enabling those features. For example, the library may have a parallel read mode that needs to be enabled. It may also require something like an MPI communicator that we could hopefully get from the VTK parallel I/O layer.

- Minimize potential for poor access

- Sure, we're stuck with the API implementation given, but we don't have to be stupid with how we use it. There may be some easy things we can do to minimize poor I/O access patterns. For example, rather than have all processes try to read the metadata from the same file at about the same time, read it in one process and distribute it.

- Feedback

- If all else fails, complain to the 3rd party. If they are serious about having a good and well used file format, they should be motivated to fix the problems. Failing that, we could simply discourage people from using that kind of file. Users probably won't need much motivation.

Acknowledgements

Sandia is a multiprogram laboratory operated by Sandia Corporation, a Lockheed Martin Company, for the United States Department of Energy's National Nuclear Security Administration under contract DE-AC04-94AL85000.